Policy Pulse - Issue #9 | Week of April 6, 2026

OWASP Agentic AI Top 10 redefines the disclosure landscape. Plus: OpenAI launches safety bug bounty, Langflow exploited in 20 hours, and GSA drops the first federal AI acquisition clause.

Your weekly briefing on cybersecurity policy affecting vulnerability disclosure and security research.

Top Story

OWASP Drops the Top 10 for Agentic AI: A New Attack Surface Demands New Disclosure Frameworks

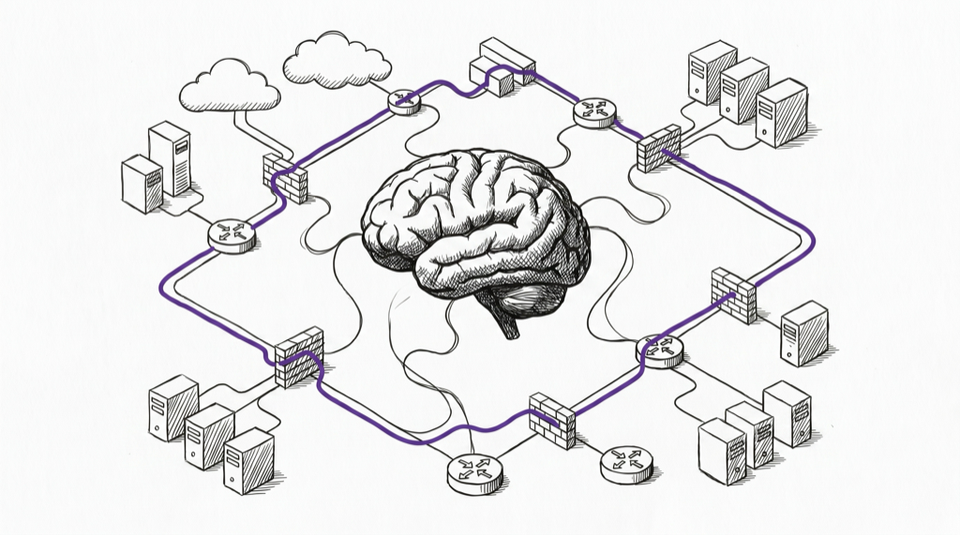

The OWASP Top 10 for Agentic Applications (2026), peer-reviewed by more than 100 security researchers and practitioners, is now the definitive risk catalog for autonomous AI systems. Unlike traditional web app risks, these cover AI agents that call APIs, execute code, move files, and make decisions with minimal human oversight. Agent Goal Hijacking (ASI01) tops the list: poisoned inputs redirect an agent to perform harmful actions using its own legitimate tools and access. Tool Misuse (ASI02) follows, covering agents that invoke tools in ways their designers never intended.

A Dark Reading poll found 48% of cybersecurity professionals now rank agentic AI as the number-one attack vector heading into 2026, outranking deepfake threats, board-level cyber recognition, and passwordless adoption. Yet only 34% of enterprises have AI-specific security controls in place.

For the VDP community, the implications are significant. Traditional disclosure programs were built for software vulnerabilities with clear reproduction steps and deterministic behavior. Agentic AI introduces probabilistic failures, multi-turn attack chains, and context-dependent exploits that existing VDP templates struggle to capture. The OWASP framework provides the taxonomy. The question is whether programs will adopt it before the next wave of AI agent exploits outpaces their capacity to triage them.

Why it matters for VDP: Disclosure programs need to evolve their intake forms, triage criteria, and severity models to handle AI-specific vulnerabilities. The OWASP Agentic Top 10 and CSA's 62-page Agentic AI Red Teaming Guide give researchers and program operators a shared language for this new class of reports.
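To make the intake-form point concrete, here is a minimal sketch of what an AI-aware report record could look like. Everything in it is an assumption for illustration: the `AgenticReport` class, its field names, and the `triage_notes` helper are hypothetical and do not come from OWASP, CSA, or any existing VDP platform. Only the two category names mentioned in this issue are included.

```python
from dataclasses import dataclass, field
from typing import List

# Only the two categories named in this issue; a real form would carry the full list.
ASI_CATEGORIES = {
    "ASI01": "Agent Goal Hijacking",
    "ASI02": "Tool Misuse",
}


@dataclass
class AgenticReport:
    """Hypothetical intake record for an AI agent vulnerability report."""

    title: str
    asi_category: str  # OWASP Agentic Top 10 identifier, e.g. "ASI01"
    attempts: int  # number of times the researcher ran the attack
    successes: int  # number of runs that reproduced the issue
    turns: List[str] = field(default_factory=list)  # ordered inputs in the attack chain
    context: str = ""  # free text: model version, enabled tools, memory state

    def reproduction_rate(self) -> float:
        """Fraction of attempts that reproduced the issue."""
        if self.attempts <= 0:
            raise ValueError("attempts must be a positive integer")
        if not 0 <= self.successes <= self.attempts:
            raise ValueError("successes must be between 0 and attempts")
        return self.successes / self.attempts


def triage_notes(report: AgenticReport) -> List[str]:
    """Return plain-language flags a triager might want before scoring severity."""
    notes = []
    if report.asi_category not in ASI_CATEGORIES:
        notes.append(f"category {report.asi_category!r} not in local list; map manually")
    if len(report.turns) > 1:
        notes.append(f"multi-turn chain: replay all {len(report.turns)} turns in order")
    if report.reproduction_rate() < 1.0:
        notes.append(
            f"probabilistic: reproduced {report.successes}/{report.attempts} runs"
        )
    if not report.context:
        notes.append("no context supplied: ask for model version and tool configuration")
    return notes
```

The design choice worth noting is that reproduction is recorded as a count of attempts and successes instead of a yes/no checkbox, so a finding that works three times out of ten is captured as such and not rejected as "could not reproduce."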

Upcoming Deadlines & Events

| Date | Event | Action |
|---|---|---|
| Apr 8 | CISA KEV remediation deadline for Langflow CVE-2026-33017 | Check KEV catalog |
| Apr 24 | NIST NCCoE DevSecOps Practices comment period closes | Submit comments |
| May 1 | DEF CON 34 Policy Track CFP deadline | Submit a talk |
| May 5 | CISA BOD 26-02 first milestone: edge device inventory due | BOD 26-02 details |
| May 6 | NIST SP 1347 (CSF 2.0 Quick-Start Guide) comment period closes | Submit comments |
| Aug 2 | EU AI Act high-risk AI system requirements take effect | EU AI Act text |
| Sep 11 | EU CRA mandatory vulnerability reporting begins | CRA reporting guidance |

This Week in Policy

AI & Emerging Tech Security

- OpenAI Launches Safety Bug Bounty on Bugcrowd: OpenAI launched a dedicated "Safety Bug Bounty" program, separate from its existing security bounty. The new program accepts reports on AI abuse and safety risks, including third-party prompt injection, data exfiltration, and disallowed actions by agentic products, even when they don't qualify as traditional security vulnerabilities. Rewards reach $20,000 for high-severity reproducible issues. This makes OpenAI the first major AI company to formalize a distinct bounty track for AI safety issues, creating a model other AI labs may follow. (OpenAI | SecurityWeek)

- Langflow Exploited Within 20 Hours of Disclosure, Added to CISA KEV: CVE-2026-33017, a critical unauthenticated RCE in the Langflow AI pipeline builder (CVSS 9.3), was actively exploited within 20 hours of the advisory. The flaw allows arbitrary Python code execution via a single HTTP request with no authentication. CISA added it to the Known Exploited Vulnerabilities catalog with a remediation deadline of April 8. Notably, Langflow's previous CVE (CVE-2025-3248) was also added to the KEV in May 2025, making this the second time an AI development tool has appeared in the catalog in under a year, underscoring that AI infrastructure is now a persistent attack surface. (Sysdig)

- CSA Launches CSAI Foundation for Agentic Security: The Cloud Security Alliance launched the CSAI Foundation on March 23, focused on securing the "agentic control plane." This complements CSA's Agentic AI Red Teaming Guide, which covers 12 threat categories including supply chain attacks, permission escalation, multi-agent collusion, and memory poisoning. (CSA)
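For teams tracking the Langflow KEV deadline mentioned above, the lookup can be scripted. The sketch below assumes the KEV catalog's public JSON feed keeps its current shape (a top-level `vulnerabilities` list whose entries carry `cveID` and `dueDate`); confirm the URL and schema against CISA's site before relying on it. The helper names and the inline sample entry are illustrative, and the sample's year is inferred from this issue's date.

```python
import json
from datetime import date
from typing import Optional
from urllib.request import urlopen

# Public KEV JSON feed; verify the location and schema on cisa.gov before use.
KEV_FEED_URL = (
    "https://www.cisa.gov/sites/default/files/feeds/"
    "known_exploited_vulnerabilities.json"
)


def load_catalog(url: str = KEV_FEED_URL) -> dict:
    """Download and parse the KEV feed (makes a network request)."""
    with urlopen(url, timeout=30) as response:
        return json.load(response)


def find_kev_entry(catalog: dict, cve_id: str) -> Optional[dict]:
    """Return the catalog entry for cve_id, or None if it is not listed."""
    wanted = cve_id.strip().upper()
    for entry in catalog.get("vulnerabilities", []):
        if entry.get("cveID", "").upper() == wanted:
            return entry
    return None


def days_until_due(entry: dict, today: date) -> int:
    """Days remaining before the remediation deadline (negative if past due)."""
    return (date.fromisoformat(entry["dueDate"]) - today).days


# Trimmed, illustrative stand-in for the real feed, using the deadline from this issue.
SAMPLE_CATALOG = {
    "vulnerabilities": [
        {"cveID": "CVE-2026-33017", "dueDate": "2026-04-08"},
    ]
}

if __name__ == "__main__":
    entry = find_kev_entry(SAMPLE_CATALOG, "CVE-2026-33017")
    if entry is not None:
        print(entry["cveID"], "due in", days_until_due(entry, date(2026, 4, 6)), "days")
```

Swapping `SAMPLE_CATALOG` for `load_catalog()` runs the same check against the live feed.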

Federal Strategy & Regulation

- White House AI Framework Calls for Federal Preemption of State AI Laws: The Trump administration released a National Policy Framework for Artificial Intelligence recommending Congress preempt state AI laws. The framework argues states should not regulate AI model development or penalize developers for third-party misuse. Over 50 Republican state legislators pushed back, urging the administration to respect federalism. The framework is non-binding, and Congress has so far rejected preemption proposals. For the VDP community, the preemption question matters: if vulnerability disclosure requirements for AI systems are set federally, it simplifies compliance but may override stronger state protections. (Roll Call)

- GSA Issues First Federal Acquisition Clause for AI Systems: The General Services Administration published the first federal acquisition regulation clause specifically for AI, imposing requirements around government data ownership, "American AI Systems" mandates, and incident reporting protocols for government AI procurement. This creates a new compliance surface for AI vendors selling to the federal government. (National Law Review)

CVE & Vulnerability Programs

- Mozilla Redefines Bug Bounty Scope for Sandbox Escapes: Firefox's bug bounty now limits "Highest Impact" sandbox escape rewards to attacks that compromise the parent process. Graphics stack vulnerabilities are assessed in their sandboxed context. Memory reads and cross-process exploits targeting other sandboxed processes are excluded from top-tier rewards. A new 7-day internal review window applies before researcher submissions are formally considered. The change signals a maturation of browser bounty programs toward more precise threat modeling. (Attack & Defense)

International Developments

- Budapest Convention's Second Additional Protocol Gains Third Ratification: Hungary became the third country to ratify the Second Additional Protocol on February 5, 2026. The protocol enables direct cooperation with service providers across jurisdictions and rapid collaboration in emergency situations, both relevant for cross-border vulnerability disclosure coordination. (Council of Europe)

- Germany NIS2 Registration Deadline Has Passed: Essential and Important entities in Germany were required to register with the BSI by March 6, 2026 under NIS2 transposition. Organizations that missed the deadline face enforcement risk. The EU Commission also proposed targeted amendments to NIS2 in January to simplify compliance, and first compliance audits for operators of critical facilities are expected by mid-2026. (NIS2 Tracker)

Legal & Researcher Protections

- Two Cybersecurity Professionals Sentenced for BlackCat Ransomware: Ryan Goldberg and Kevin Martin, both cybersecurity industry workers, pleaded guilty to conducting ALPHV/BlackCat ransomware attacks against U.S. victims, extorting approximately $1.2M in Bitcoin. They face up to 20 years in prison. The insider-threat angle (security professionals turned attackers) underscores the importance of clear ethical boundaries and legal frameworks that distinguish legitimate research from criminal activity. (DOJ)

Worth Reading

- The OWASP Agentic Top 10 2026: What It Means for AI Agents and Non-Human Identities (Entro Security): Why traditional security models fail for autonomous AI agents and what the OWASP framework means for security operations.

- How AI Red Teaming Evolves with the Agentic Attack Surface (Palo Alto Networks): Maps the shift from testing LLMs for harmful content to testing autonomous agent chains, with practical implications for VDP programs covering AI systems.

- EU Cyber Resilience Act: Preparing Your VDP for 2026 Reporting Requirements (HackerOne): Step-by-step guide for mapping disclosure programs to CRA compliance, with specific workflow recommendations for September readiness.

- The White House Legislative Recommendations: National Policy Framework for Artificial Intelligence (Ropes & Gray): Legal analysis of the preemption framework and what it means for the patchwork of state AI regulation.

Policy Pulse is a weekly bulletin from disclose.io. Keeping the security research community informed on policy that affects our work.

Have a tip or want to contribute? Reply to this email, reach out on Twitter/X, or drop a comment here!