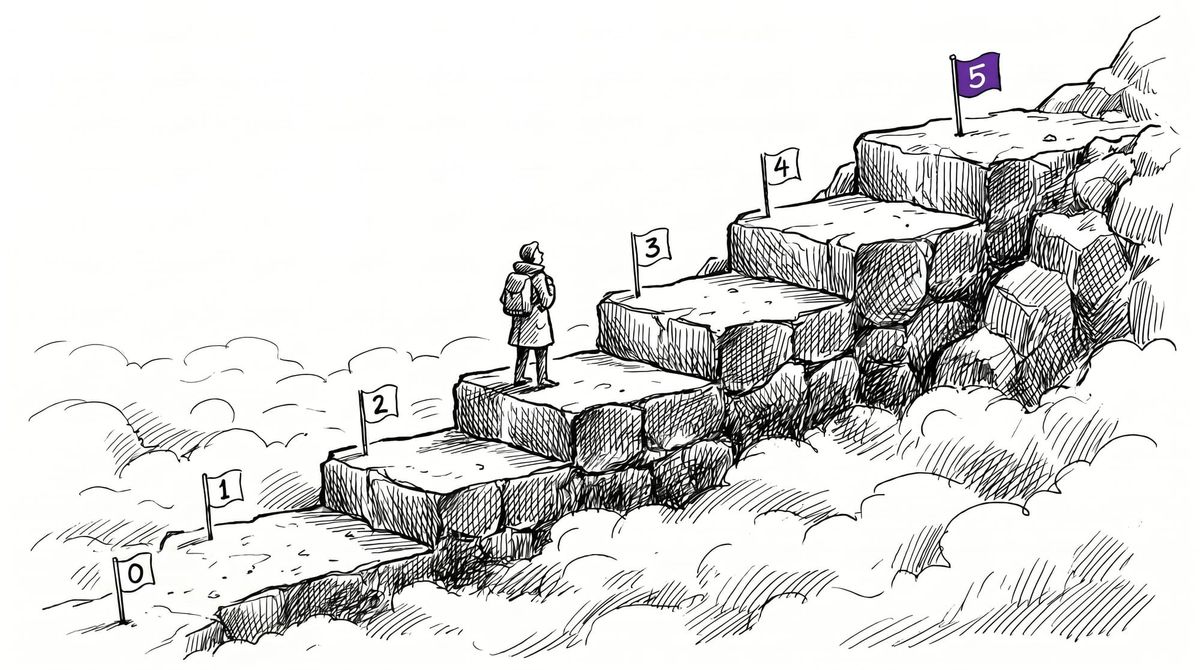

The disclose.io Maturity Model: a six-level ladder for vulnerability disclosure programs

The disclose.io Maturity Model is a six-level ladder — from 'no contact' to 'full safe harbor with CVD' — that underpins every entry in the new directory. Here's how it works, and who it's for.

When a researcher finds something broken, the next decision isn't technical — it's a judgment call about the organization on the other side. Will they pick up the phone? Will they thank you and fix it? Will they send a lawyer?

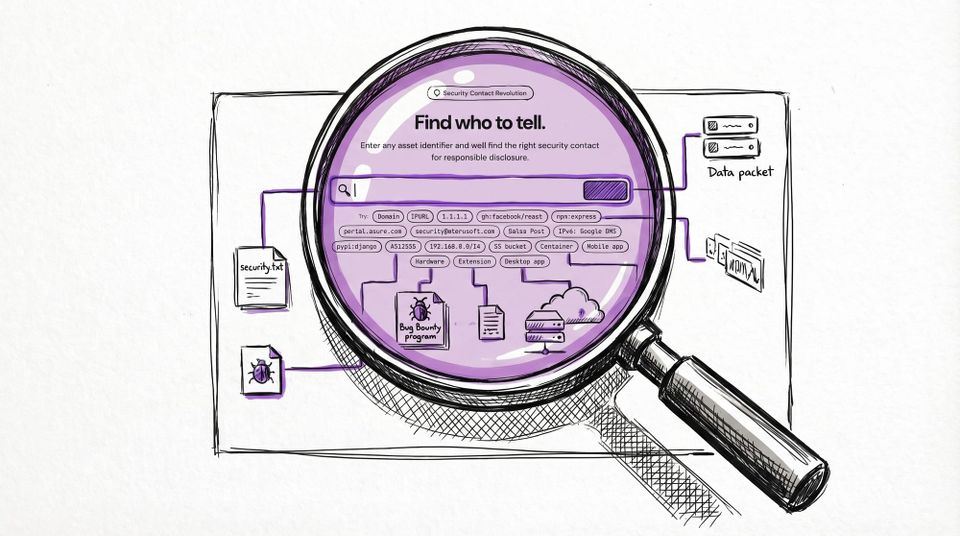

For most of the last two decades, the only way to answer those questions was experience, rumor, and the occasional horror story. The new directory on disclose.io is an attempt to replace that with something structured — a single place to look up a program and see, at a glance, how prepared it is to receive a report.

What makes that possible is a small, opinionated framework we call diostatus: the disclose.io Maturity Model.

One line

Findable → Communicating → Not hostile → Explicitly safe → Accountable.

Every vulnerability disclosure program sits somewhere on that arc. The model's job is to name where, using plain English, so that both sides of a disclosure — the researcher with the finding, and the organization that needs the finding — have a shared vocabulary.

The six levels

| Level | Name | Key signal | What a researcher gets |
|---|---|---|---|
| 0 | Not Present | No contact, no policy | Nothing. No path in. |
| 1 | Contact Only | security.txt or intake method exists | A way to reach someone, but no definition or protection. |
| 2 | Basic VDP | Public policy + channel | Documented process. Still no legal protection. |
| 3 | Partial Safe Harbor | Won't pursue legal action | You can report safely. Testing is a different question. |
| 4 | Full Safe Harbor | Explicit authorisation + carve-outs from anti-hacking laws | You can test safely, within scope. |
| 5 | Full Safe Harbor + CVD | Level 4 plus a coordinated disclosure timeline | Accountability on both sides: you know when a fix lands. |

Each level builds on the one below. You can't skip — a program doesn't move from "no contact" to "full safe harbor" without passing through the intermediate states, because the intermediate states are what make the top of the ladder real.
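To make the "you can't skip" rule concrete, here is a minimal Python sketch of the ladder as a cumulative check. It is an illustration only, not the directory's actual rating logic: the `MaturityLevel`, `Signals`, and `classify` names are hypothetical, and the five yes/no signals are a simplification of the "Key signal" column in the table.

```python
from dataclasses import dataclass
from enum import IntEnum


class MaturityLevel(IntEnum):
    """The six levels from the table, in ladder order."""
    NOT_PRESENT = 0
    CONTACT_ONLY = 1
    BASIC_VDP = 2
    PARTIAL_SAFE_HARBOR = 3
    FULL_SAFE_HARBOR = 4
    FULL_SAFE_HARBOR_CVD = 5


@dataclass(frozen=True)
class Signals:
    """Hypothetical yes/no signals, one per rung above Level 0."""
    has_contact: bool = False         # security.txt or other intake method
    has_public_policy: bool = False   # public policy + channel
    no_legal_action: bool = False     # commits not to pursue legal action
    authorizes_testing: bool = False  # explicit authorisation + carve-outs
    has_cvd_timeline: bool = False    # coordinated disclosure timeline


def classify(signals: Signals) -> MaturityLevel:
    """Walk up the ladder and stop at the first missing signal."""
    rungs = [
        signals.has_contact,
        signals.has_public_policy,
        signals.no_legal_action,
        signals.authorizes_testing,
        signals.has_cvd_timeline,
    ]
    level = 0
    for present in rungs:
        if not present:
            break  # a gap caps the level, whatever sits above it
        level += 1
    return MaturityLevel(level)
```

Under these assumptions, a program that promises not to pursue legal action but has no public policy still lands at Level 1: `classify(Signals(has_contact=True, no_legal_action=True))` returns `MaturityLevel.CONTACT_ONLY`, because the missing Level 2 rung stops the climb.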

You can read the full level-by-level breakdown in the maturity framework documentation.

Why a ladder, not a label

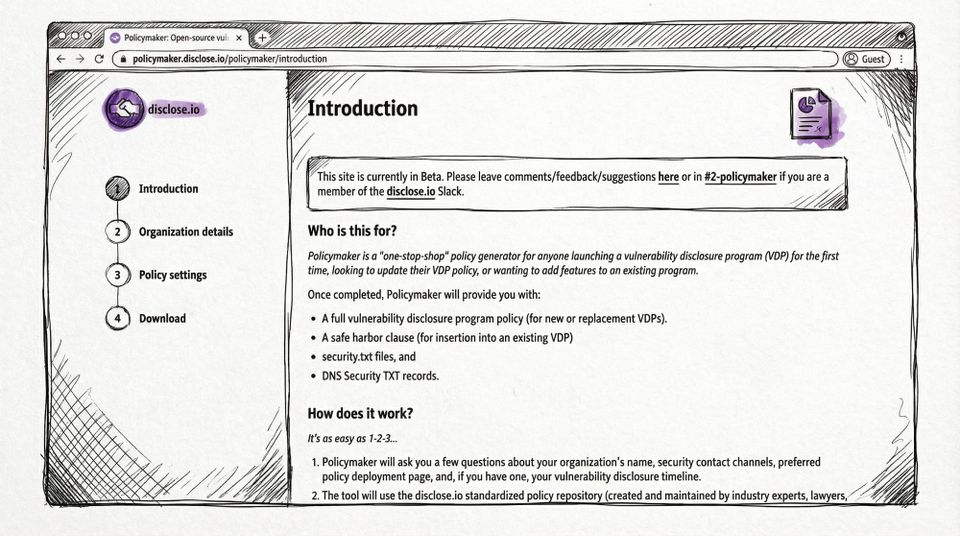

The older version of this project leaned on a binary: does a program have safe harbor language, yes or no? That was useful for adoption surveys, but it obscured the hard part — most programs aren't anywhere near safe harbor yet, and the gap between "has a security.txt" and "has bilateral safe harbor with a coordinated disclosure clause" is huge.

A six-level ladder makes two things possible:

- A researcher can triage a program in a few seconds and make a judgment call on how to communicate and collaborate with it. Level 0 or 1 is "probably don't" territory. Level 2 is "document everything and proceed carefully." Level 3+ is where meaningful collaboration starts.

- An organization can see the next step, not just the end state. Going from Level 1 to Level 2 is a weekend of work. Going from 2 to 3 is a legal conversation. Each transition has a specific shape, and the ladder turns an overwhelming "do a VDP" into an incremental path.
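The two uses above can be sketched as a pair of lookups. This is a rough illustration, not part of disclose.io: the function and constant names are hypothetical, the guidance strings are taken from the bullets, and effort notes are included only for the two transitions the text actually describes.

```python
from typing import Optional


def triage_guidance(level: int) -> str:
    """Researcher view: map a maturity level to the guidance in the text."""
    if not 0 <= level <= 5:
        raise ValueError(f"maturity level must be 0-5, got {level}")
    if level <= 1:
        return "probably don't"
    if level == 2:
        return "document everything and proceed carefully"
    return "meaningful collaboration starts here"


# Organization view: only the transitions the text gives an effort note for.
NEXT_STEP_EFFORT = {
    (1, 2): "a weekend of work",
    (2, 3): "a legal conversation",
}


def next_step_effort(level: int) -> Optional[str]:
    """Return the effort note for moving up one level, if the text gives one."""
    return NEXT_STEP_EFFORT.get((level, level + 1))
```

For example, `triage_guidance(2)` returns the "document everything" guidance, and `next_step_effort(2)` returns `"a legal conversation"`. Transitions the text does not describe return `None` rather than a guess.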

How this shows up in the directory

The directory is the model in action. Every entry is tagged with its current maturity level, and those ratings surface inline — you don't have to click through to find out whether a program has safe harbor language, because the level tag tells you.

Under the hood, the ratings come from the same community-maintained data that used to live in the diodb repository, as well as data pipelines that scan the Internet for security.txt files and DNS security TXT records. The difference is the surface area: instead of scrolling a GitHub README, you filter. Sort by level. Search by vendor. Find programs at Level 4 or above if that's your comfort bar. Find Level 1 programs if you want to contribute triage reports and help move them up.
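If you were working with an export of the data rather than the directory UI, the same filter, sort, and search could look something like this in Python. This is a sketch under assumptions: the `Entry` shape and the `filter_entries` helper are hypothetical, the real record format is not shown here, and the sample vendors are placeholders rather than real programs.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class Entry:
    """Hypothetical directory record: a vendor name and its maturity level."""
    vendor: str
    level: int


def filter_entries(
    entries: Iterable[Entry],
    min_level: int = 0,
    max_level: int = 5,
    vendor_query: str = "",
) -> List[Entry]:
    """Keep entries in the level range whose vendor name contains the query,
    sorted by level (highest first), then by vendor name."""
    query = vendor_query.casefold()
    matches = [
        e for e in entries
        if min_level <= e.level <= max_level and query in e.vendor.casefold()
    ]
    return sorted(matches, key=lambda e: (-e.level, e.vendor.casefold()))


# Placeholder data for illustration only; these are not real programs.
SAMPLE = [
    Entry("Example Corp", 4),
    Entry("Placeholder Inc", 1),
    Entry("Sample Labs", 5),
    Entry("Demo Org", 2),
]

comfort_bar = filter_entries(SAMPLE, min_level=4)               # Level 4 or above
needs_help = filter_entries(SAMPLE, min_level=1, max_level=1)   # Level 1 only
```

Here `comfort_bar` holds the Level 5 entry followed by the Level 4 entry, and `needs_help` holds the single Level 1 entry.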

If you see a rating that doesn't match reality — a program listed at Level 2 that actually has safe harbor, or a Level 4 that just quietly dropped its anti-circumvention carve-out — every entry has an edit link that goes straight to the underlying record. The model only works because the data underneath it is honest, and the data underneath it is only honest because researchers and program owners keep it that way.

Who the model is for

There are two audiences, and the model is designed to serve them differently.

For researchers, the ladder is a triage tool. It's the answer to "should I engage with this program, and on what terms?" before you commit time to a finding. Level 0 and 1 programs aren't off-limits — sometimes they're the most important places to report, because that's where lives and infrastructure are actually at risk — but you should go in with both eyes open, document everything, and consider whether a third-party intermediary is the right path. Level 3+ programs are where you can spend your effort without carrying the legal risk yourself.

For organizations, the ladder is a roadmap. Most VDPs are not built in a single sprint — they accrete over quarters, as internal security, legal, and comms teams align on what "accepting external reports" actually means. The maturity model's job is to make that accretion legible. You can point at Level 2 and say we're here without pretending you're at Level 5. You can set a goal of moving to Level 3 this quarter. You can show leadership exactly what the next step costs — because each transition has a clear, bounded scope.

Both audiences benefit from the same underlying mechanic: positive reinforcement. The model is not a scorecard for shaming laggards. It's a climb. Every step up is visible, every step up is rewarded with better researcher engagement, and every step up makes the next step easier.

That's the philosophy behind disclose.io in general — secure easy, insecure obvious — and the maturity model is where that philosophy gets a shape you can actually point at.

What's next

The directory launched with maturity ratings for every program in the existing dataset, which is thousands of entries. Coverage is good but not perfect.

The long-term bet is that once the ladder is legible, programs will move up it. We've already seen that dynamic inside bug bounty platforms: when a visible, shared standard exists for "this is what good looks like," the market ratchets toward it. The maturity model has been around for many years now, but this is the first public version of that standard that attempts to cover the full landscape, not just the programs that have already opted in to a platform.

If you want to see the model applied in the wild, start at the directory and browse. If you want to understand the philosophy and how the levels are defined in detail, the maturity framework page has the long-form version.

And if you want to help move a program up the ladder — yours, or someone else's — the contribution links are everywhere!